Most MVPs aren’t

The acronym has been stretched to mean anything a founder ships in a hurry. The version that survives is mostly a marketing exercise. The version that helps is a falsifiable bet — and almost nobody writes the bet down.

A few months ago a founder showed us his MVP. It was a working web app, built in seven weeks by a freelancer he found on Workana. It had user authentication, a dashboard, three core flows, an admin panel, and a Stripe integration. It cost him R$ 38,000. He was proud of it, and he’d brought us in because he wanted help “scaling it.” We asked one question: what did the MVP teach you that you didn’t already know?

He paused. He said the customers liked it. Then he said three of them had signed up. Then he said two of those three were friends. Then we sat in silence for a minute, because we’d both arrived at the same realization at roughly the same time. The MVP hadn’t validated anything. It had built something. Those are different.

Eric Ries popularized “minimum viable product” in the late 2000s; the term itself predates him and is usually credited to Frank Robinson. The phrase appears in The Lean Startup with a specific, careful definition: the version of a new product which allows a team to collect the maximum amount of validated learning about customers with the least effort. Read that twice. The unit of output is not a product. The unit of output is learning. Everything else is a side-effect.

Almost nobody who uses the term in 2026 means it the way Ries did. The acronym has been stretched until it means whatever a founder ships in a hurry, and the version that wins on LinkedIn is mostly a marketing exercise — a working app, a launch tweet, a screenshot of three signups, and a post about “validating product-market fit.” This is not validation. It’s theater dressed in the vocabulary of validation.

The corruption is structural

The reason MVPs stopped working as a learning device isn’t that founders got lazy. It’s that the cost of building the artifact dropped faster than the cost of figuring out what the artifact should test. In 2008, building a working web app from scratch could take six engineers and four months. The friction forced the founder to think hard about what to build, because building anything was expensive. By 2018, with no-code tools and cheap freelancers, the cost of building had collapsed. By 2024, with AI-assisted development, it collapsed again.

What hadn’t collapsed — what couldn’t collapse — was the cognitive work of deciding what to test. Defining the falsifiable claim, designing the test that would actually falsify it, choosing the metric, choosing the population, choosing the threshold: that work is the same difficulty in 2026 as it was in 2008. It’s not a tooling problem. It’s a thinking problem.

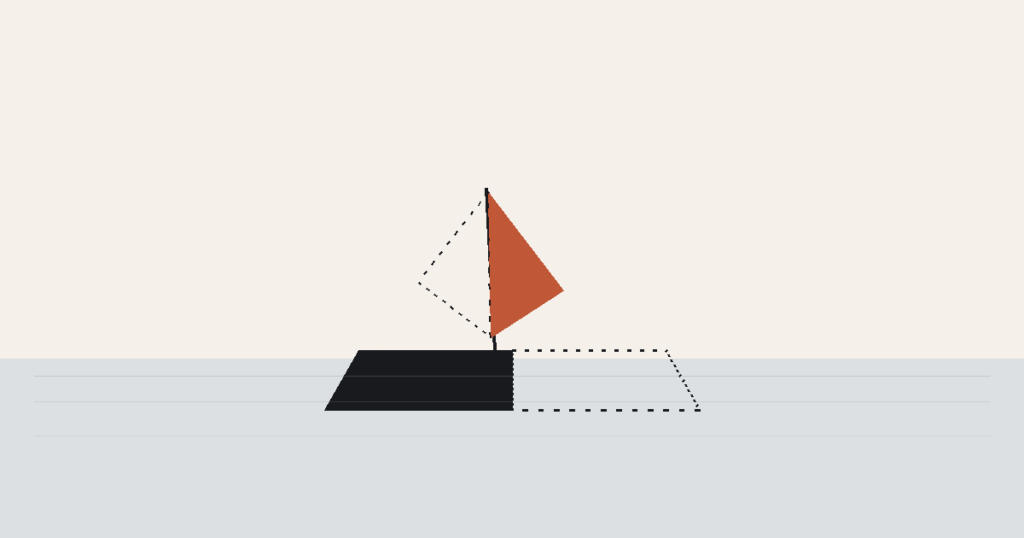

So a strange thing happened. The cost of building dropped to near zero, while the cost of deciding what to build stayed constant. The path of least resistance became: skip the thinking, start the building. Founders who would have been forced into clarity by the old economics could now skip the clarity step entirely. The MVP became a build, not a test. And the language stayed — because “MVP” still sounds like the founder is being disciplined, even when the work shows none of the discipline the term originally implied.

The result is a category of artifact we’ve come to call the theatrical MVP. It looks like an MVP. It costs what an MVP used to cost. It ships in the timeframe of an MVP. But it doesn’t tell the founder anything they didn’t already know, because no claim was specified before the build started.

The test that matters: write the bet first

The single change that converts a theatrical MVP back into a real one is brutally simple. Before any code is written, the founder writes one sentence:

“If, by [date], we have not seen [specific behavior] from [specific population], at [specific threshold], we are wrong about [specific claim].”

This sentence is not a slogan. It’s a contract. It commits the founder to a falsifiable claim and to a definition of failure. Both halves matter. Without the claim, there’s nothing to test. Without the definition of failure, there’s no learning — every outcome can be rationalized as “promising.”

We put a version of that sentence to three founders we worked with last year, before they scoped their first build. One of them wrote it down: “If, within sixty days of launch, fewer than 30% of the small clinics we onboarded have logged into the dashboard at least three times in their second month, the asynchronous-care thesis is wrong and we should pivot.” Sixty days later, the number was 11%. They didn’t ship a v2. They went back and re-interviewed the clinics, found that the bottleneck wasn’t asynchronous-care interest at all, and rebuilt around an entirely different workflow. That MVP did exactly what an MVP is for. It didn’t deliver a product they could scale. It delivered a thesis they could stop building on.

The other two founders refused to write the sentence. We asked. They said they couldn’t pick a number, because they didn’t know what number was reasonable. We pointed out that not knowing what number is reasonable is the actual problem the MVP is supposed to solve. If you can’t articulate what success looks like before you start, you cannot recognize success or failure when you finish. You can only rationalize the result you got.
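The clinic bet earlier in this section can be written down as data before the build starts, which makes the definition of failure hard to renegotiate afterwards. What follows is a minimal sketch in Python, not a tool any of these founders used: the `Bet` class, its field names, and the `is_falsified` rule are hypothetical, and the deadline is an illustrative date. It assumes the behavior can be reduced to a single rate, and that a rate below the threshold at the deadline counts as failure.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Bet:
    """A falsifiable bet, fixed before any code is written (hypothetical structure)."""
    deadline: date
    behavior: str
    population: str
    threshold: float  # minimum share of the population, from 0.0 to 1.0
    claim: str

    def sentence(self) -> str:
        # Render the bet in the same shape as the one-sentence contract.
        return (
            f"If, by {self.deadline.isoformat()}, we have not seen {self.behavior} "
            f"from {self.population}, at {self.threshold:.0%} or more, "
            f"we are wrong about {self.claim}."
        )

    def is_falsified(self, observed_rate: float, today: date) -> bool:
        # No verdict is allowed before the deadline the founder committed to.
        if today < self.deadline:
            raise ValueError("deadline not reached; no verdict yet")
        return observed_rate < self.threshold


bet = Bet(
    deadline=date(2026, 6, 30),  # illustrative date, not from the case above
    behavior="three or more dashboard logins in the second month",
    population="onboarded small clinics",
    threshold=0.30,
    claim="the asynchronous-care thesis",
)
print(bet.sentence())
print(bet.is_falsified(observed_rate=0.11, today=date(2026, 6, 30)))
```

With the clinic numbers, an observed 11% against a 30% threshold comes back as falsified. The class is frozen on purpose: once the bet is written, the threshold cannot be quietly edited to fit the result.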

Why the sentence is the hard part

The reason most founders skip writing the sentence is that the sentence requires them to confront the parts of the business they’ve left vague. To write a falsifiable claim, you have to know your customer specifically enough to specify them. You have to know the behavior specifically enough to instrument it. You have to know the threshold specifically enough to defend it. None of those are technical skills. All of them are commercial discipline.

This is why the MVP problem is rarely an engineering problem. It’s a commercial-clarity problem masquerading as a build problem. The founder hires a developer because the developer can be hired. The clarity work has no obvious vendor. So the founder buys what they can buy, ships what they can ship, and the original question — what are we testing? — gets quietly dropped along the way.

A good engineering partner is the second-to-last line of defense against this. A good engineering partner refuses to start coding until the founder has answered the four questions: what claim are we testing, what behavior would falsify it, who’s the population, what’s the threshold? The last line of defense is the founder themselves. If the partner doesn’t push, and the founder doesn’t write the sentence, what gets shipped is, at best, a working application, and the founder finds out a year later that they spent forty thousand dollars learning nothing.
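The four questions can also be treated as a literal gate on the project brief. The sketch below is a hypothetical illustration in Python: the key names and the `missing_answers` helper are assumptions made for the example, and a blank answer is treated the same as a missing one.

```python
# The four questions a brief must answer before any code is written.
# Key names are hypothetical, chosen for this example.
REQUIRED_ANSWERS = ("claim", "falsifying_behavior", "population", "threshold")


def missing_answers(brief: dict[str, str]) -> list[str]:
    """Return the questions the brief leaves unanswered, in a fixed order."""
    return [key for key in REQUIRED_ANSWERS if not brief.get(key, "").strip()]


brief = {
    "claim": "Small clinics want asynchronous care",
    "population": "onboarded small clinics",
    "falsifying_behavior": "",  # left vague
    # "threshold" was never written down
}

gaps = missing_answers(brief)
if gaps:
    print("Do not start the build. Unanswered:", ", ".join(gaps))
else:
    print("All four questions answered.")
```

Here the brief names a claim and a population but no falsifying behavior or threshold, so the gate stays closed. The check is trivial by design; the hard part is the answers, not the code.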

What an MVP is not

The point of writing all this isn’t to be precious about the term. It’s to flag a category error that costs founders runway. So, for the avoidance of doubt:

An MVP is not a small product. It can be small or large; what matters is whether it’s set up to test something. An MVP is not a launch. Launching is a separate activity, sometimes part of the test, sometimes not. An MVP is not “the thing we ship before we build the real thing.” If the thing you ship doesn’t change a real decision based on its outcome, calling it an MVP is just borrowing dignity from the original term.

It is also not the maximum thing you can build in three months. Most founders, when forced to reduce scope, reduce on the wrong axis — they cut the polish and keep the breadth. A real MVP cuts the breadth, hard, and keeps enough polish that a real customer’s behavior is observable. Eight rough features tell you less than two features done well, because the customer’s friction is the variable, not the surface area.

The version of the term we’d keep

We’ve thought about throwing the acronym away. It’s so corrupted that defending it is exhausting. But there’s one usage that still earns its keep, and we think the discipline of distinguishing it is worth more than the bother of inventing a new word.

The version that earns its keep is the smallest artifact that, when put in front of a real customer, yields a yes-or-no verdict on the most expensive remaining assumption. That’s the test. Three things have to be true for the artifact to qualify. It has to be small enough that the build doesn’t outweigh the learning. It has to be in front of a real customer: not a friend, not a designer, not the team. And it has to produce an answer, yes or no, that changes what the founder builds next.

If your MVP doesn’t change what you build next, it isn’t one. Whatever it is, it’s not what Ries was talking about. And the question worth asking, before you write the spec, is the one our friend in São Paulo couldn’t answer: what would this teach me that I don’t already know?

If you can’t say, don’t build. The cheapest MVP is the one you don’t ship — because writing the sentence is what you actually owed the company.